When people talk about AI sandboxes today, they usually mean:

- seccomp, seatbelt, or bubblewrap

- containers built from namespace mappings, cgroups, and allowlists

- hand-tuned profiles bolted onto the existing OS

- some assemblage of the above

These are all useful tools. But none of them were built for agentic AI security, and every single one of them inherits the same original sin: ambient authority.

You might be familiar with that term from Mark Miller's work on capability security. It's become particularly important in our new AI-inflected security era, because it describes the default condition of every modern runtime.

A given process inherits whatever permissions its execution environment happens to provide, which might include filesystem access, network egress, the developer's git credential, an AWS API key sitting in an environment variable, the identity of the user who launched the shell...

The list goes on.

No one intentionally granted that authority, and the process never asked for it. It is simply there.
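To make that concrete, here is a minimal Python sketch of what an ordinary process can see without anyone granting it anything. The helper names `credential_like_vars` and `ambient_snapshot`, and the keyword list, are illustrative assumptions rather than a real secret scanner.

```python
import os

# Illustrative substrings only; a real scanner would be far more thorough.
SUSPICIOUS = ("KEY", "TOKEN", "SECRET", "PASSWORD")

def credential_like_vars(env):
    """Return the names of environment variables that look like credentials."""
    return sorted(name for name in env if any(s in name.upper() for s in SUSPICIOUS))

def ambient_snapshot():
    """Collect a few things the process inherited without asking for them."""
    return {
        "cwd": os.getcwd(),
        "home": os.path.expanduser("~"),
        "credential_like_vars": credential_like_vars(os.environ),
    }
```

Calling `ambient_snapshot()` from any script, including one an agent wrote a moment ago, returns the launching user's context. Nothing in the script had to request it.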

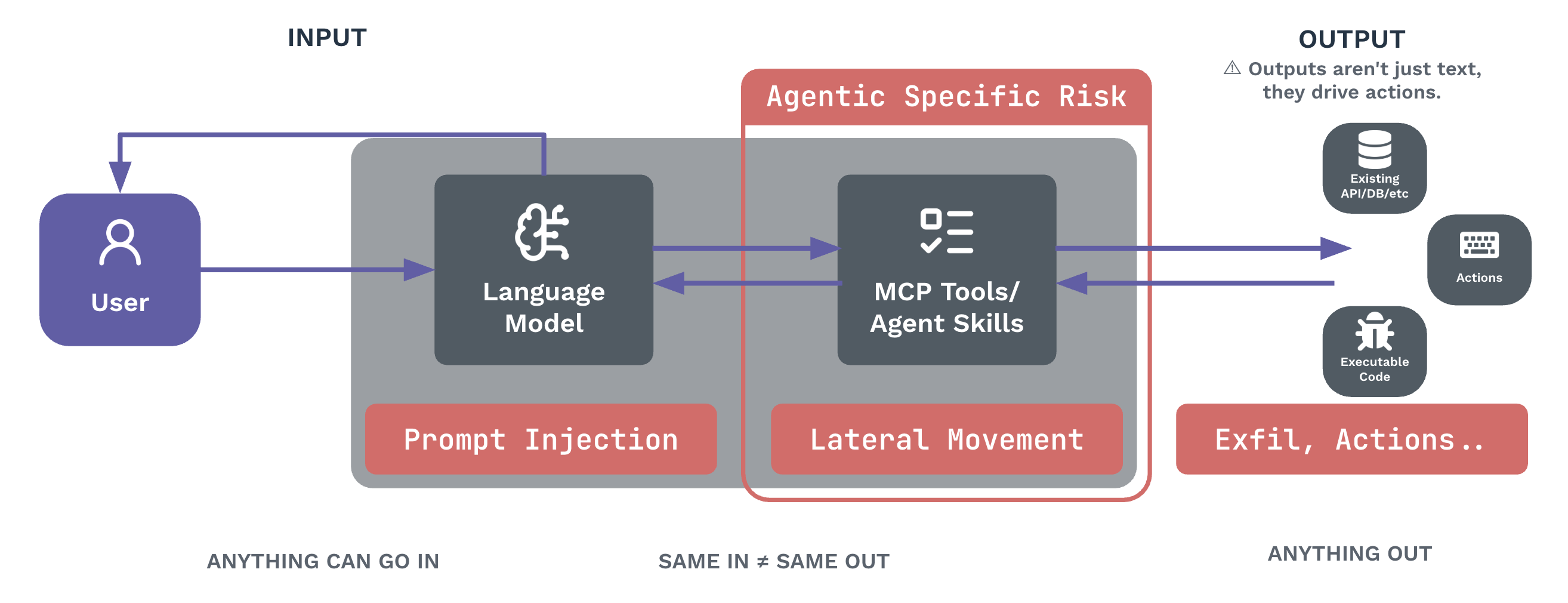

When the process in question is a deterministic, human-authored binary, ambient authority is (arguably, sometimes) a risk that you can manage with audits and reviews. But today, developers are using AI tools like agents and LLM CLIs on their workstations, and these processes inherit the developers’ identity, capabilities, authority, and context. When you put agents and non-deterministic workflows in the mix, ambient authority creates an intolerable attack surface.

The problem here is an entire paradigm that violates the principle of least authority: the notion that every process should hold the minimum authority required to do its job, and nothing more. Modern runtimes turn that principle on its head, making authority the default and restriction the exception.

Conventional sandboxing security stacks try to whittle the attack surface down. You map the LLM into a constrained namespace. You give it network isolation through bubblewrap. You write allowlists for which sockets it can open and which hosts it can reach. You run it as a different Linux user.

Now you spend your time playing whack-a-mole: a new exfiltration path opens up and you patch it; a new credential vector appears and you patch that too. You spend just as much time maintaining and patching the long tail of containerized dependencies in your distribution, even if they are not formal requirements of your use case.

An engineer I spoke with recently calls this the cartographer's dilemma. The reference is to a commission to map a region perfectly, every line and boundary precisely traced. The work is impossible. The territory keeps moving, and the lines are never quite right. That is the trap of any allow-by-default control system layered onto a runtime that grants authority by default. You are mapping a coastline that keeps shifting, and the LLM is happy to walk along it until it finds an unmapped cove.

AI agent security from first principles

The alternative is to start from zero authority and add capabilities only where they are explicitly granted.

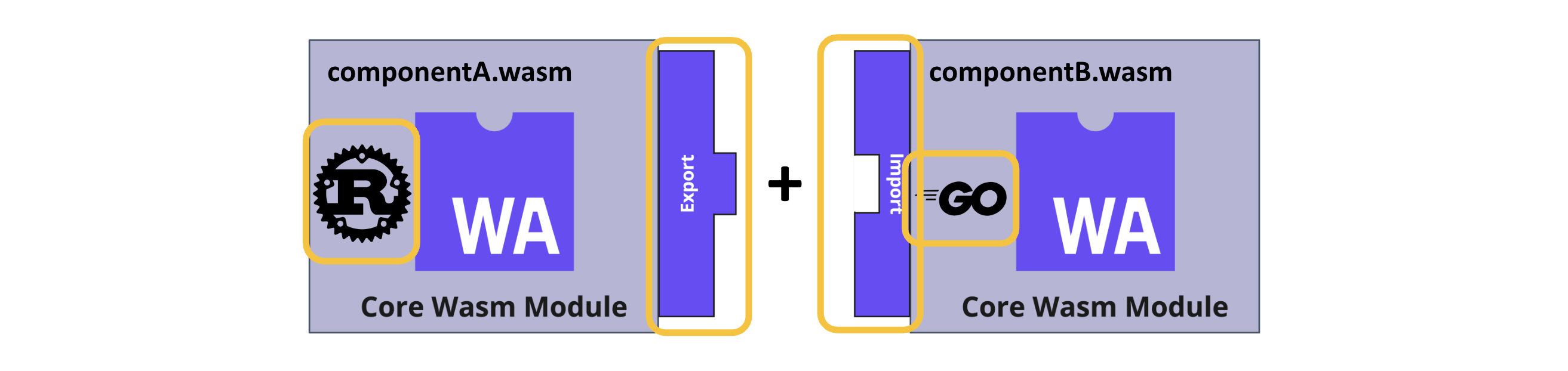

This is what WebAssembly and WASI give you out of the box. A Wasm component begins with no filesystem, no network, no system calls, no environment variables, and no visibility into the host. Any capability it possesses must be declared as a typed import in the component's interface, and the host manages the capability grant.

This is Miller's object-capability model expressed as a runtime: the reference is the permission, and a component that holds no reference can reach no resource.

In plain language: a Wasm component has exactly the authority it needs to do its job, and nothing else. The principle of least authority becomes a runtime guarantee.
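The shape of that guarantee can be sketched in a few lines of Python. This is an analogy only: Python cannot enforce the boundary the way a Wasm runtime does, and `Component`, `instantiate`, and `MissingCapability` are hypothetical names, not part of any Wasm toolchain.

```python
from dataclasses import dataclass
from typing import Callable

class MissingCapability(Exception):
    pass

@dataclass(frozen=True)
class Component:
    imports: tuple   # capability names the component declares up front
    run: Callable    # receives exactly those capabilities as keyword arguments

def instantiate(component, grants):
    """Hypothetical host: link declared imports to grants, or refuse to start."""
    missing = [name for name in component.imports if name not in grants]
    if missing:
        raise MissingCapability(f"not granted: {missing}")
    # Pass only what was declared; grants the component did not import are withheld.
    return component.run(**{name: grants[name] for name in component.imports})

# A component that declares a single import and receives nothing else.
word_count = Component(
    imports=("read_text",),
    run=lambda read_text: len(read_text().split()),
)
result = instantiate(word_count, {"read_text": lambda: "least authority by default"})
```

The component never looks anything up. It either receives a reference from the host or it does not start.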

For AI agent access control, there are two critical implications.

First, capability grants can be virtualized. When you give a Wasm component a filesystem capability, you are not handing it /etc and walking away. You are handing it an interface, and the host backs that interface with whatever it wants to back it with: a real directory, an in-memory tmpfs, a per-session blob store, or a synthetic view assembled from a database.

The component cannot tell the difference. It cannot escape the abstraction. This is what makes filesystem isolation in a Wasm sandbox fundamentally different from a chroot or a bind mount. The boundary is a typed interface rather than a path on disk.
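A rough Python sketch of the idea, assuming a two-method file interface invented for this example (`write` and `read`), not the actual wasi:filesystem API. The same component code runs against either backing without modification.

```python
import tempfile
from pathlib import Path

class InMemoryFiles:
    """Backing store that never touches disk."""
    def __init__(self):
        self._files = {}
    def write(self, name, data):
        self._files[name] = data
    def read(self, name):
        return self._files[name]

class DirectoryFiles:
    """Backing store rooted at a real directory chosen by the host."""
    def __init__(self, root):
        self._root = Path(root).resolve()
    def _path(self, name):
        path = (self._root / name).resolve()
        if not path.is_relative_to(self._root):  # requires Python 3.9+
            raise PermissionError(name)
        return path
    def write(self, name, data):
        self._path(name).write_text(data)
    def read(self, name):
        return self._path(name).read_text()

def component(files):
    """Sees only the interface; it has no way to ask what is behind it."""
    files.write("notes.txt", "hello")
    return files.read("notes.txt")
```

Swapping `InMemoryFiles()` for `DirectoryFiles(some_dir)` is a host decision. The component's code and its view of the world stay the same.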

Second, capability grants compose. A component does not import "the network." It imports wasi:http with the specific shape of HTTP traffic it is allowed to handle. It imports wasi:keyvalue with a specific bucket. Every capability is named, scoped, and reviewable. There is nothing ambient. There is nothing inherited. There is only what was passed in.

That is the substrate. Now we can talk about what runs on top of it.

AIOps and authorship of intent

Engineers working with LLMs are becoming authors of intent.

While they may no longer write the code, they are still accountable for the outcomes of what their software does: correctness, security, cost, maintainability. The shape of the work has changed, but the contract has not.

What is missing is an operational framework that captures that intent, plans against it, executes within bounds you can describe in advance, and produces an audit trail that closes the loop back to the original ask.

That framework is what I am calling AIOps. AIOps is the operational substrate for governing autonomous coding work end-to-end. Better IDEs and better agentic harnesses live inside it. The framework is broader than either, and it has a shape:

Intent capture

Work begins from a GitHub issue, a Slack message, an email, or some other trigger. The original expression of intent is the artifact you plan against, and it is the artifact you eventually compare results back to.

Classification and plan extraction

A component reads the intent and produces a structured plan: what the steps are, what each step needs to touch, and what would constitute success. The plan is reviewable before any model runs against it.
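One way to picture such a plan is as plain data. The field names and capability strings below are assumptions made for illustration; the point is only that the plan can be reviewed, and its requested capabilities listed, before anything runs.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Step:
    name: str
    needs: tuple     # capability names this step must touch
    success: str     # what would constitute success for this step

@dataclass(frozen=True)
class Plan:
    intent: str      # the original ask, kept verbatim for later comparison
    steps: tuple

def capabilities_requested(plan):
    """Everything a reviewer would have to approve before any model runs."""
    return sorted({cap for step in plan.steps for cap in step.needs})

plan = Plan(
    intent="Add pagination to the orders endpoint",
    steps=(
        Step("read schema", ("db:orders:read",), "schema summary produced"),
        Step("write patch", ("fs:session-dir",), "patch applies cleanly"),
    ),
)
```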

Policy-based scheduling

Each step in the plan gets routed to the right model based on cost, security posture, resilience, and performance. A cheap step does not need a frontier model. A sensitive step does not run against a vendor your policy excludes. The router is model-agnostic by design, so the matching is a policy decision rather than a vendor lock-in.
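As a sketch of what that policy decision might look like, the router below picks the cheapest model that satisfies a step's requirements. The model names, vendors, costs, and the `tier` scale are all made up for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Model:
    name: str
    vendor: str
    cost_per_call: float
    tier: int        # higher means more capable (made-up scale)

def route(models, min_tier, excluded_vendors=frozenset()):
    """Pick the cheapest model that meets the tier and vendor policy."""
    allowed = [
        m for m in models
        if m.tier >= min_tier and m.vendor not in excluded_vendors
    ]
    if not allowed:
        raise LookupError("no model satisfies the policy")
    return min(allowed, key=lambda m: m.cost_per_call)

MODELS = [
    Model("small", "vendor-a", 0.01, tier=1),
    Model("frontier", "vendor-a", 0.50, tier=3),
    Model("frontier-alt", "vendor-b", 0.60, tier=3),
]
```

A cheap step calls `route(MODELS, min_tier=1)`; a sensitive step passes `excluded_vendors` and is matched against whatever remains.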

Bounded execution

Every step runs in a Wasm sandbox with a capability grant scoped to that step. The component for a step that reads from a database has a database capability and nothing else. The component for a step that writes a file has a filesystem capability scoped to a per-session directory and nothing else. The principle of least authority is enforced by the runtime.

Validation and iteration

This is a loop, never a one-shot. Outputs are checked against the plan. Failures route back through the loop with the context of what went wrong. The user in the loop reviews the plan and only inspects diffs when the higher-order artifacts already say something is wrong.
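The loop itself is small. Here is a minimal sketch, assuming `execute` and `validate` are callables supplied by the pipeline; the toy versions below stand in for a real step and a real check.

```python
def run_with_validation(execute, validate, max_attempts=3):
    """Re-run a step until its output validates, feeding failures back in."""
    feedback = None
    for attempt in range(1, max_attempts + 1):
        output = execute(feedback)
        problems = validate(output)
        if not problems:
            return output, attempt
        feedback = problems  # the context of what went wrong
    raise RuntimeError(f"still failing after {max_attempts} attempts: {feedback}")

# Toy stand-ins: the step only succeeds once it has seen feedback.
def execute(feedback):
    return "tests pass" if feedback else "tests fail"

def validate(output):
    return [] if output == "tests pass" else ["unit tests failed"]

output, attempts = run_with_validation(execute, validate)
```

The attempt cap matters as much as the loop: a step that never validates surfaces as an exception instead of retrying indefinitely.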

Observability and audit

Every model invocation, every capability use, and every state transition produces a structured trace. You are not sending work into the void and hoping it returns. You are running an instrumented pipeline, and the trace is the receipt.
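A structured trace can be as simple as an append-only list of events serialized as JSON lines. The event kinds and field names here are assumptions for illustration, not a defined schema.

```python
import json

class Trace:
    """Append-only record of what happened during one run."""
    def __init__(self, run_id):
        self.run_id = run_id
        self.events = []

    def record(self, kind, **fields):
        event = {"run_id": self.run_id, "seq": len(self.events), "kind": kind, **fields}
        self.events.append(event)
        return event

    def to_jsonl(self):
        """One JSON object per line, ready for an audit sink."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.events)

trace = Trace("run-1")
trace.record("model_invocation", model="small", step="read schema")
trace.record("capability_use", capability="db:orders:read", step="read schema")
trace.record("state_transition", step="read schema", state="validated")
```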

The governance properties fall out of the shape rather than being layered on. A plan that violates policy fails before a model is invoked. A step that tries to reach a capability it was not granted fails at the runtime boundary. A run that costs more than its budget hits a hard limiter. None of this requires after-the-fact monitoring to enforce. It simply requires the runtime to mean what it says. That’s exactly what a Wasm component runtime is designed to do.
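The hard limiter is the easiest of these to sketch. In the version below the check happens before the spend, so the limit is never crossed; `Budget` and `BudgetExceeded` are hypothetical names, and amounts are integer cents to avoid floating-point drift.

```python
class BudgetExceeded(Exception):
    pass

class Budget:
    """Refuses any charge that would take the run past its limit."""
    def __init__(self, limit_cents):
        self.limit_cents = limit_cents
        self.spent_cents = 0

    def charge(self, cents):
        if self.spent_cents + cents > self.limit_cents:
            raise BudgetExceeded(
                f"{cents} more would exceed the {self.limit_cents} cent limit"
            )
        self.spent_cents += cents

budget = Budget(limit_cents=100)
budget.charge(60)   # accepted: 60 of 100 spent
```

A second `budget.charge(60)` raises `BudgetExceeded` and leaves `spent_cents` at 60, so the refusal happens at the moment of the attempt, not in a later report.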

Agentic AI security and outputs

The six stages above describe the governance path. But what do the governed agents actually do? Agent outputs tend to fall into one of three shapes, and each shape demands a different grant.

Producing artifacts

Most coding work ends in some sort of artifact, such as a signed image that could be executed. The artifact will be acted on later, by a different system, under a different identity. The capability grant for this kind of step could be a filesystem scoped to a build output directory, optionally a signing key scoped to a single artifact, or a registry push capability scoped to one package. Nothing about producing a manifest gives a step the right to run that manifest, to push outside its namespace, or to sign anything else.

Acting on existing systems

Some steps cause a side effect in the world. Running a piece of code in a runner. Writing to a database. Calling an API. Mutating a configuration store. The capability shape here is the most varied because the systems being acted on are varied: wasi:http with an egress allow-list and required headers; wasi:keyvalue with a specific bucket and rate limit; a typed RPC handle for an internal service; wasi:filesystem with a per-session path. Each grant is named, scoped, and reviewable before the step runs.
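For the HTTP case, a grant with an egress allow-list and required headers might look like the sketch below. It only validates and prepares a request; sending is left out. `HttpGrant`, `EgressDenied`, the host name, and the header are illustrative assumptions, not the wasi:http interface.

```python
from urllib.parse import urlsplit

class EgressDenied(Exception):
    pass

class HttpGrant:
    """Hypothetical egress grant: allowed hosts plus headers the host insists on."""
    def __init__(self, allowed_hosts, required_headers):
        self.allowed_hosts = frozenset(allowed_hosts)
        self.required_headers = dict(required_headers)

    def prepare(self, url, headers=None):
        parts = urlsplit(url)
        if parts.scheme != "https" or parts.hostname not in self.allowed_hosts:
            raise EgressDenied(url)
        # Required headers win over anything the step supplied.
        return url, {**(headers or {}), **self.required_headers}

grant = HttpGrant({"api.internal.example"}, {"X-Run-Id": "run-1"})
url, headers = grant.prepare(
    "https://api.internal.example/v1/orders",
    {"Accept": "application/json"},
)
```

Because the grant is an ordinary value, it can be printed, diffed, and reviewed before the step that holds it ever runs.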

Model calls fall under this category, by the way. A frontier-model API call is an action against an external system, governed the same way any other HTTP egress would be: through a capability scoped to a vendor, a key, and an allow-listed endpoint. The supervisor wrapping that call sees every byte going in and out, and it can redact, log, or refuse. The reason this is worth flagging is that the runtime treats the LLM as one more system to govern. Other models, and other vendors, plug in the same way.
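A supervisor of that kind can be sketched as a wrapper around the model call. Everything here is an assumption for illustration: `supervised_call` is a hypothetical name, `model` is any callable that takes and returns a string, and the single pattern only matches the well-known shape of an AWS access key ID.

```python
import re

# Matches the shape of an AWS access key ID: "AKIA" plus 16 uppercase letters or digits.
SECRET_PATTERN = re.compile(r"AKIA[0-9A-Z]{16}")

def supervised_call(model, prompt, log):
    """Redact known secret shapes, log both directions, then call the model."""
    redacted = SECRET_PATTERN.sub("[REDACTED]", prompt)
    log.append({"direction": "out", "bytes": len(redacted.encode())})
    reply = model(redacted)
    log.append({"direction": "in", "bytes": len(reply.encode())})
    return reply

log = []
echo_model = lambda text: text  # stand-in for a real vendor client
reply = supervised_call(echo_model, "key AKIAIOSFODNN7EXAMPLE here", log)
```

The model only ever receives the redacted prompt, and the log records traffic in both directions whether or not the call turns out to be interesting.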

Triggering downstream workflows

Some steps do not produce an artifact and do not act on a system directly. They start more work somewhere else, sending their non-deterministic output to be the input of the next workflow. Kicking off a build pipeline. Routing to another agent. Spawning a subordinate plan. The capability grant is a workflow-trigger handle scoped to a target set, with constraints on rate, depth, and what kinds of intents it can spawn. Recursion is bounded by the runtime: a step cannot spawn unbounded children because the trigger capability says it cannot.
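A minimal sketch of such a handle, assuming a total spawn budget as a simple stand-in for a rate limit. `TriggerHandle` and `TriggerDenied` are hypothetical names; each child handle is one level deeper and shares the parent's budget, so the whole tree is bounded.

```python
class TriggerDenied(Exception):
    pass

class TriggerHandle:
    """Hypothetical trigger capability bounded by target set, depth, and count."""
    def __init__(self, targets, max_depth, max_spawns, _depth=0, _budget=None):
        self._targets = frozenset(targets)
        self._max_depth = max_depth
        self._depth = _depth
        # Children share one mutable budget so the limit covers the whole tree.
        self._budget = _budget if _budget is not None else [max_spawns]

    def spawn(self, target):
        if target not in self._targets:
            raise TriggerDenied("target not in granted set")
        if self._depth >= self._max_depth:
            raise TriggerDenied("depth limit reached")
        if self._budget[0] <= 0:
            raise TriggerDenied("spawn budget exhausted")
        self._budget[0] -= 1
        return TriggerHandle(
            self._targets, self._max_depth, 0, self._depth + 1, self._budget
        )

root = TriggerHandle({"build"}, max_depth=2, max_spawns=5)
child = root.spawn("build")
grandchild = child.spawn("build")
```

Here `grandchild.spawn("build")` raises `TriggerDenied` because the depth limit of 2 has been reached, and `root.spawn("deploy")` raises because `deploy` was never in the granted target set.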

The three shapes cover most of what a coding agent actually does. Laying them out is useful because each shape maps cleanly onto a typed, scoped capability grant in the runtime. An agent never needs generic authority to act in the world. It needs a specific kind of grant for a specific kind of act, and the runtime enforces that distinction at the boundary.

What runs after the agent finishes

The pipeline I've described so far ends with a concrete artifact: a code change, a binary, a deployment manifest, or a service ready to ship.

Once the artifact is out in the world, it works in existing systems. It calls your existing APIs; it reads and writes against your existing databases. But it was not crafted by hand, and we need to be clear-eyed and intentional about what that means. While more and more code is generated by LLMs, do we fully trust the outputs of these workflows?

The author of intent still owns the outcomes of their authorship. Among other things, that means we're responsible for security and behavior under load. And that means we need a sandbox for the artifact itself.

The substrate for the artifact is the same substrate the agent workflow ran on. The artifact runs in the same kind of Wasm sandbox an agent step ran in. It holds capabilities for the systems it is allowed to reach, and nothing else. The principle of least authority that governed the artifact's creation governs the execution too.

In practice, the data center where your agent runs is probably not the data center where the produced artifact runs. The capabilities granted at planning time are not the capabilities granted at runtime. The two phases live on different infrastructure under different identities. What is not siloed is the unit of compute. A WebAssembly component is the unit of compute for an agent's step, for a tool invocation made along the way, and for the final artifact running in production. Same shape. Same boundary. Same audit trail.

AIOps, end to end

With that thread in hand, the rest of the picture follows.

Most of the gates that matter in an AIOps pipeline are automated rather than manual. The quality attributes that we desire in software are enforced as a part of the process of software construction. Policy is encoded. Capability grants are encoded. Budgets are encoded. The human in the loop approves the encoded version, and from there the pipeline runs without a person holding it open at every step. If user attention is required at a particular gate, this is surfaced as an exception; everything else goes into the audit trail. That is the only way you can run thousands of autonomous workers without governance becoming the bottleneck.

From there, it should be easy to picture how AIOps dovetails with continuous delivery, with AI agent access control at every step.

Imagine a workflow that releases its next version, runs as a canary against live traffic, and watches the metrics. The infrastructure does not make an A/B cut. It performs a percentage-based rollout. If the metrics signal degradation, the rollout halts and rolls back automatically. The result is reported back to the ticket the work originated from, with the audit trail attached.
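The control logic of that rollout fits in a few lines. This is a sketch under stated assumptions: `error_rate_at` is a hypothetical callable that reports the observed error rate at a given traffic percentage, and the stage list and threshold are arbitrary.

```python
def progressive_rollout(stages, error_rate_at, max_error_rate):
    """Walk traffic percentages upward; roll back to zero on degradation."""
    history = []
    for percent in stages:
        rate = error_rate_at(percent)
        history.append((percent, rate))
        if rate > max_error_rate:
            # Halt and roll back automatically; the history is the audit record.
            return {"status": "rolled_back", "traffic_percent": 0, "history": history}
    return {"status": "completed", "traffic_percent": stages[-1], "history": history}

STAGES = (1, 5, 25, 100)
healthy = progressive_rollout(STAGES, lambda p: 0.001, max_error_rate=0.01)
degraded = progressive_rollout(
    STAGES, lambda p: 0.001 if p < 25 else 0.2, max_error_rate=0.01
)
```

In the degraded run the rollout stops at the 25 percent stage and never reaches 100. The returned `history` is what would be attached to the originating ticket.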

Every step is reviewable after the fact. Every step is bounded by what the platform was allowed to do. The human in the loop reviewed the intent and the plan, and the audit trail closes the gap on everything else.

That is the kind of process you can achieve with an AIOps cycle. The work consists in wiring intent capture, planning, scheduling, sandboxed execution, and observability into a single pipeline that engineers can drive without leaving the platform in which they author intent.

We are drawing the map

WebAssembly components can be packaged as OCI artifacts. They distribute through the registries you already operate. They run on a control plane that orchestrates sandboxes the same way Kubernetes orchestrates pods, with the sandbox boundary expressed as a typed capability grant.

We have built that control plane. It is called Cosmonic Control, and it is the operational substrate underneath the AIOps vision described above. You can try it out for free.

Cosmonic Control changes what is possible at the infrastructure layer. Components start in microseconds. There are no cold starts. Components are small enough that a single node can hold thousands of them, so density stops being a tradeoff against isolation. Every component carries its own typed capability boundary, so isolation stops being something you bolt on after the fact. There is no ambient authority anywhere in the system, by construction.

Put together, that is a runtime for ultra-dense, capability-bounded functions running at massive scale. It is what you need if you want to give every prompt, every plan, every pipeline step, and every produced artifact its own private sandbox without paying for a container per request.

It is also what lets you move quickly: spinning up bounded execution for thousands of autonomous workers in parallel, without governance, infrastructure cost, or cold-start latency becoming the bottleneck.

Read our free whitepaper, Securing the Vibe-Coded Enterprise, to learn how Cosmonic Control enables your teams to vibe code at full speed while enforcing deny-by-default execution, the principle of least authority, and zero-trust isolation across every phase of the AI software development life cycle, from prompt to production.

Every existing governance and security control system you already run, from policy bundles to identity to attestation to audit sinks, slots in over the same substrate. Nothing is asked of you that you do not already have a place for. The substrate just stops fighting your controls and starts expressing them.

The componentized world is not a thought experiment. It is the most direct path to AI agent security and to running autonomous coding work safely at scale, and it is the only path I have seen that does not require the cartographer to keep redrawing the map.